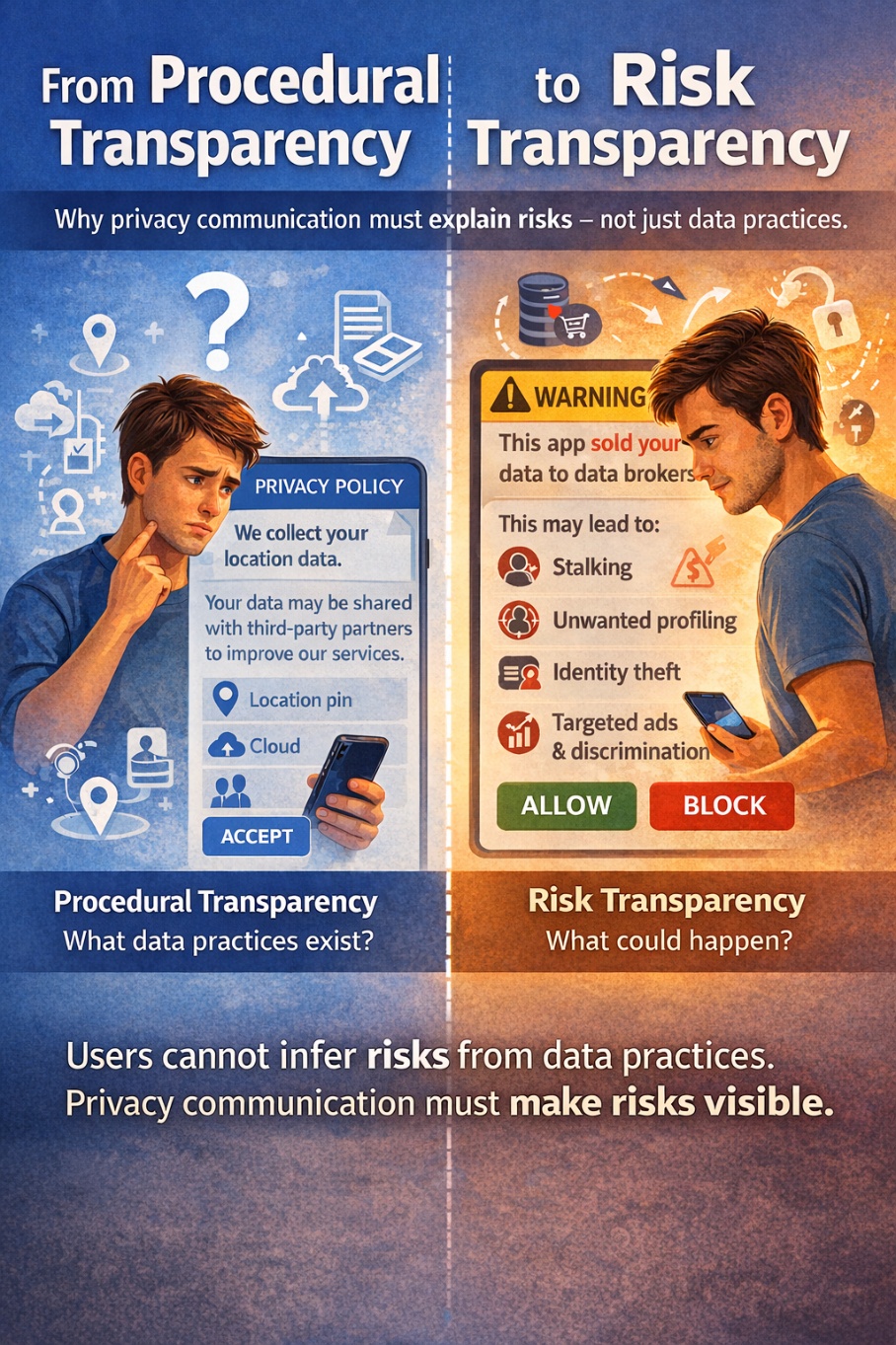

Data privacy is everywhere and yet, for most people, it remains deeply opaque. Cookie banners, privacy policies, and app permissions are meant to inform us, but in reality, they often fail to answer the most important question: What could actually happen to me? This challenge is at the heart of our recent article, “From Procedures to Peril: Towards Risk Transparency in Information Privacy for Users,” now published in Telecommunications Policy.

From Dagstuhl to Publication

The idea for this article originated in January 2025 at the Dagstuhl Seminar “Grand Challenges for Research on Privacy Documents”. The seminar, organized by Florian Schaub (University of Michigan, US), Christine Utz (Radboud University Nijmegen, NL), and Shomir Wilson (Pennsylvania State University, US), brought together an interdisciplinary group from fields such as human-computer interaction, law, natural language processing, and public policy to rethink how privacy documents function in society.

Over the course of the seminar, participants identified a key issue: despite widespread concern about privacy, most users lack the time, expertise, and resources to understand privacy documents, leaving them underinformed. Building on these discussions, a group of international researchers continued collaborating over the following year, ultimately developing the perspective piece published today.

The Core Argument: It’s Not About Design, It’s About Content

Much of today’s privacy communication focuses on procedural transparency: explaining what data is collected, how it is processed, and who it is shared with. While legally required, this information is often complex, abstract, and difficult to interpret. Even when users understand it, a crucial gap remains: They are rarely told what risks they actually face.

In a digital environment increasingly shaped by AI and data-driven business models, this gap is becoming more problematic. Personal data is collected, analyzed, and shared at scale, enabling everything from personalization to profiling and potentially leading to harms such as discrimination, manipulation, or security risks.

Toward Risk Transparency

The article argues for a fundamental shift: from describing what is done with data to communicating what could happen as a result. The idea of risk transparency is well established in other domains. For example, in medicine, patients are not expected to understand biochemical mechanisms; instead, they are informed about side effects and risks in clear and actionable terms.

Applying this to privacy means moving from statements like:

“This app collects your location data”

to something more meaningful:

“Your location data may be shared with third parties and could reveal your daily routines, potentially leading to profiling or misuse.”

Research in risk communication shows that people make better decisions when risks are concrete and contextualized. Early evidence suggests that this also holds true for privacy: highlighting risks can increase awareness and encourage more protective behavior.